(Article changed on January 16, 2013 at 18:14)

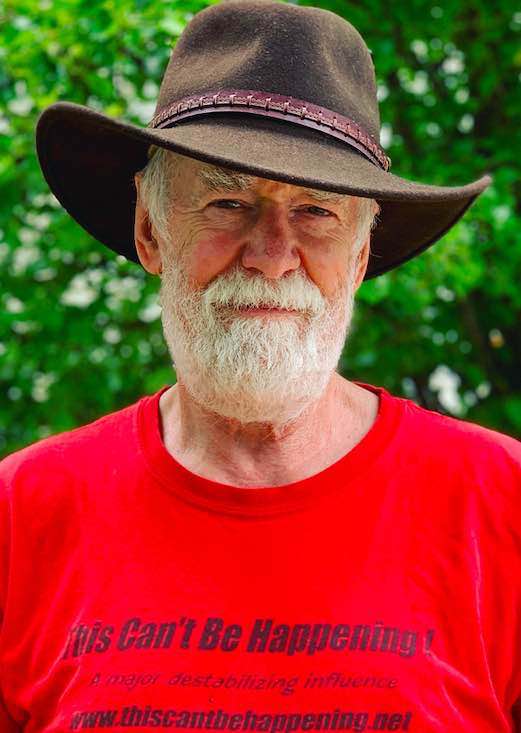

By Dave Lindorff

What is wrong with America?

Big question.

Simple answer.

We Americans have completely lost any sense of why a country, a society, and a government exist.

Politicians, lobbyists, corporate media talking heads, and far too many ordinary people have accepted and are promoting as gospel the circular notion that it's important to encourage business to grow so that people will be hired and the economy can grow. This specious argument is used to justify the weakening of labor unions, the raising of taxes on workers while they are cut for companies and the rich, the cutting of earned benefit programs like Social Security and Medicare, the gutting of worker safety and environmental safety regulations, and the elimination of regulation of banking, corporate mergers and takeovers, pharmaceutical companies and the like. In fact, every government action that makes life harder or more dangerous for ordinary working people or for the poor is defended on the basis that it is necessary so that business can make more profit and help the economy to grow.

Growing the economy, however, is not, or certainly should not be, the reason we have government, the reason we are a country, or the reason we are a society.
